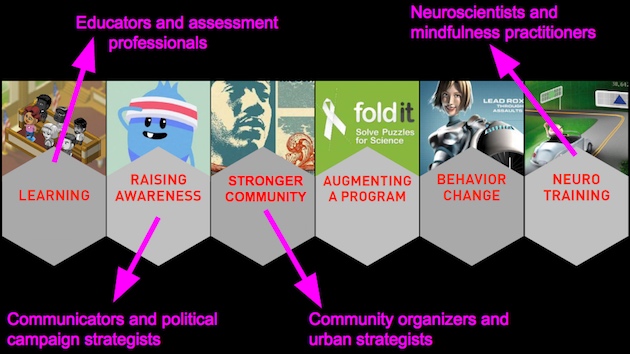

We are quietly circulating a new way to visualize our field, emphasizing the range of social impact that is possible with games. We have been testing our visuals and concept at several conferences recently.

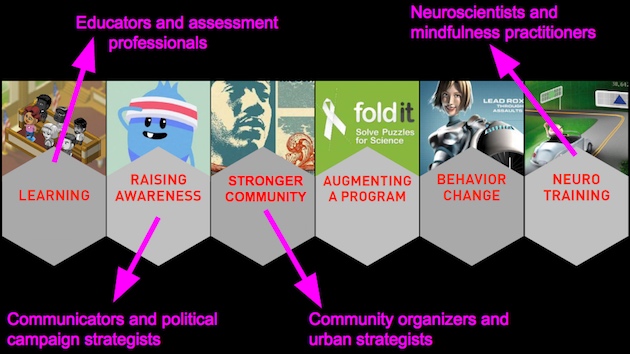

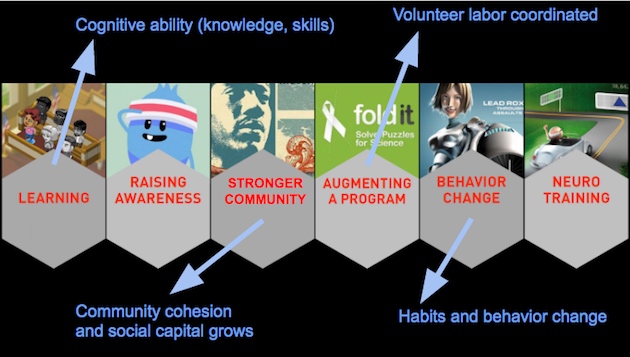

In brief: these “six types” of impact define a perimeter for what games can do. Big-picture perspective on impact is an art, as we explained in our last post on the range of impact. Our approach is unusual, in part because it is:

- visual,

- useful (not just truthful), and

- more inclusive.

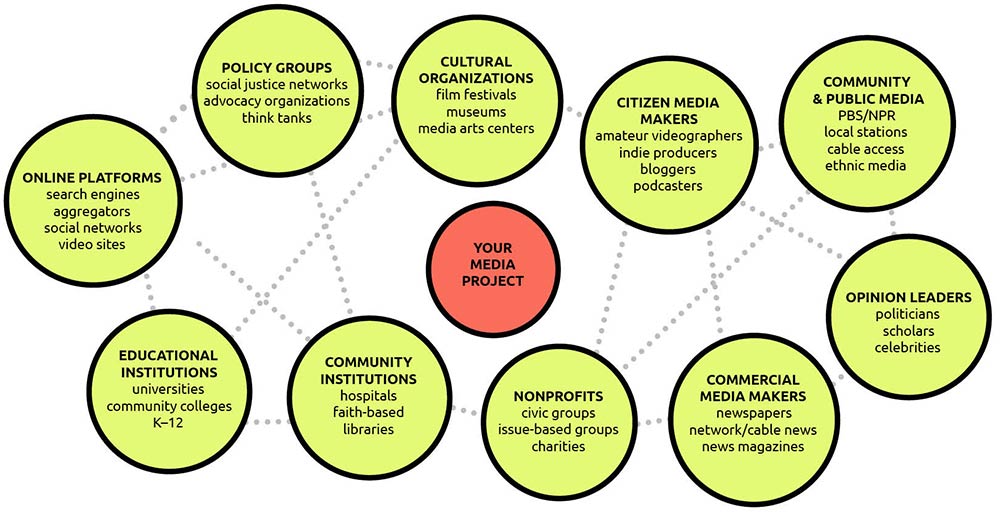

Others have tried to map game impact, but we found that they all seemed to be preaching to a disciplinary or academic audience. As we said in our first report, most typologies are deep but not connected. So our method begins with practitioner communities that have distinct tools for measuring impact.

Here is a visual explanation…

Feature #1: Professional communities are distinct

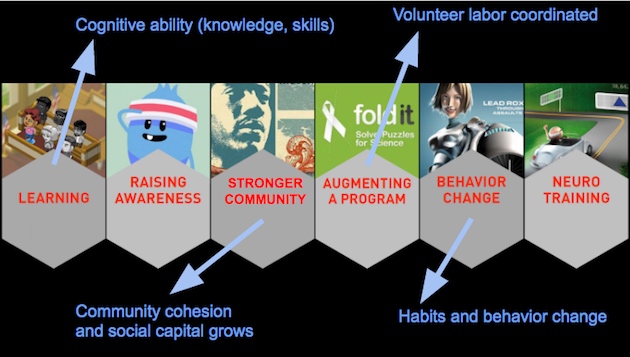

…did any of these surprise you? Our goal is to show the breadth of impact. For each type, there are distinct professionals trying to make sense of games — with their own tools. Look how different the impact indicators are too…

Feature #2: Indicators are very different

Feature #3: Simple language to hint at layers

Our titles are crazily short, typically just two words for each category. At best, they hint at more layers. Such minimalist language risks being simplistic. Of course things are more complex. But that’s the point: rather than maximum detail, we want to prioritize useful framing.

Framing is a delicate art. The language must be simple, yet valid and inviting to newcomers. Our goal is to orient new funders and producers, facilitate comparisons between disciplines, and reduce friction in the design process.

It is essential to hit the right level of granularity. We would rather err on the side of simplicity and accessibility. As our prior post argued, we need perspective across the range of impact. Our bias is toward accessibility, especially since academic publications of typologies tend to be biased toward discipline-specific language.

Feature #4: Tested across groups (in the wild)

We refine our framing “in the wild” by trying it. Validity comes from observing the typology in action. Most prominently, Asi Burak (see our advisory board) has been testing it in keynote presentations, especially to non-game audiences. He also deserves credit for much of the visual look and the case study work below.

Success is measured in translation across very different stakeholders — not just disciplines, but gaps in practice between designers, funders, and researchers. (The dangers of fragmentation are spelled out in our first report.)

We are bravely trying this all in public, including with this blog post. Interviews at conferences, often following presentations, are a primary way to gather data (e.g., see our talk at DiGRA-FDG). Our methodology may shift to more controlled laboratory conditions soon, but we resist the temptation to dive into the laboratory prematurely. The pressing challenge is to develop a valid portfolio of impact categories. Once we establish first-order legitimacy with the design, there will be a variety of ways to test and refine the components and language.

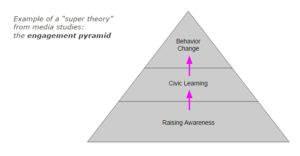

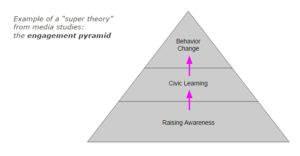

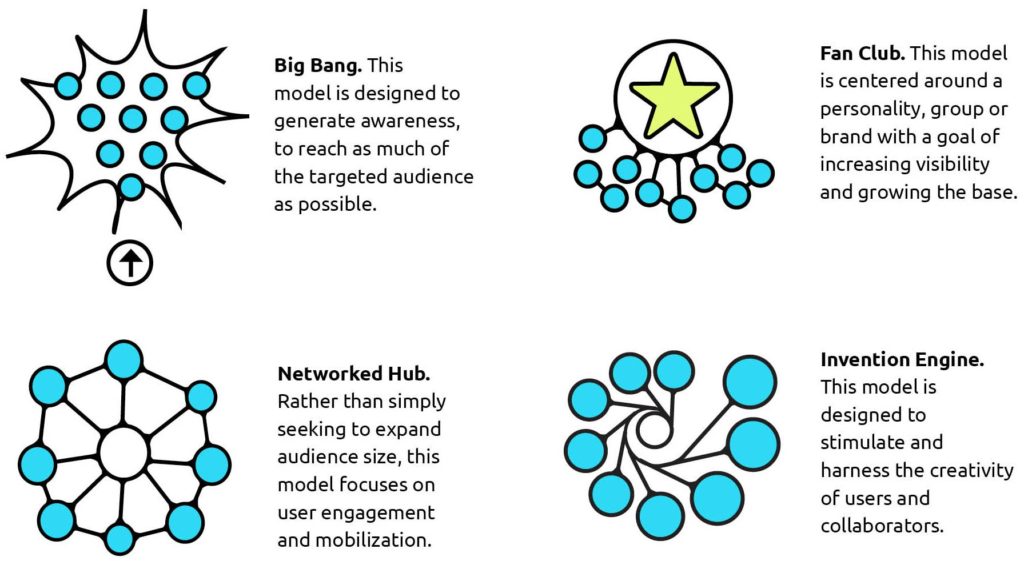

Feature #5: Horizontal format — no “super theories”

It is tempting to arrange these types in a sequence and declare one “most important.” Based on the research in our first report, we resist this approach as divisive and generally arbitrary.

There is a bit of truth in the temptation to sequence. For example, broadcast media strategists often talk of a “funnel,” beginning with raising awareness to a mass audience, after which a subset actually learns something, and hopefully some of them change their behavior or vote. That sequence is legitimate. But it is also legitimate to build some habits (like reading the news) that lead to learning later.

We similarly reject blanket statements (e.g., “policy change is the only real way to have an impact”) as exclusionary. Our goal is to have an open conversation about impact, and avoid the tendency to shut some people out prematurely.

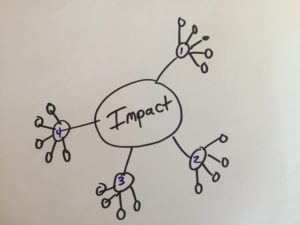

So… how did we actually pick the categories?

- visible success stories — with recognizable names that serve as shortcuts, decreasing the amount of explaining we need to do

- research basis — with published evidence of impact

- portfolio contrast — so that each is distinct

Beware some assumptions:

- overlap is inevitable: every game does more than one of these, including the examples above; in fact, one of our goals is to help projects be brave enough to admit it

- this will evolve: both as new games take off and as our understanding of what games can do continues to broaden; this typology is therefore a work in progress, and your feedback is welcome!

Feature #6: Framing with examples

Our goal is to frame the categories, and to stay concrete. Actual games are shown immediately (and discussed below). In contrast to most academic norms, we chose to be illustrative rather than verbosely precise.

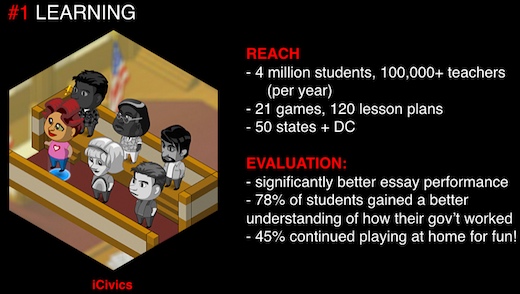

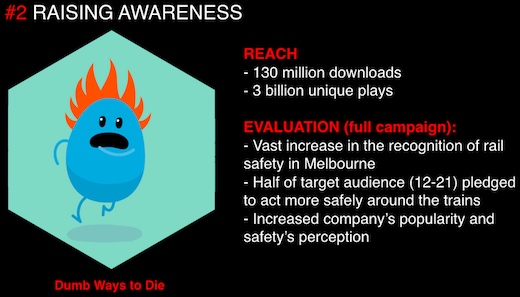

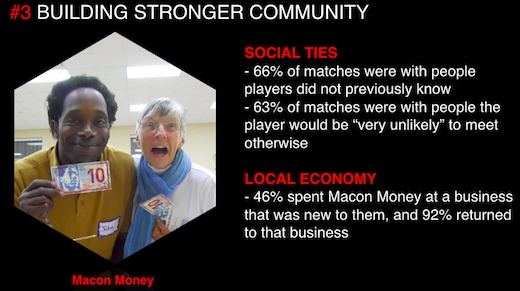

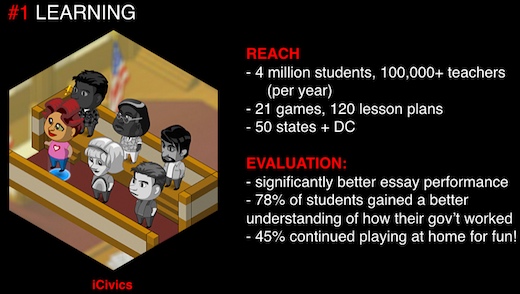

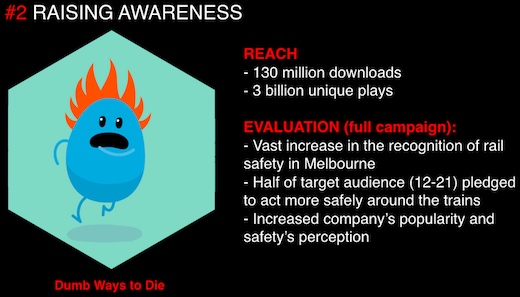

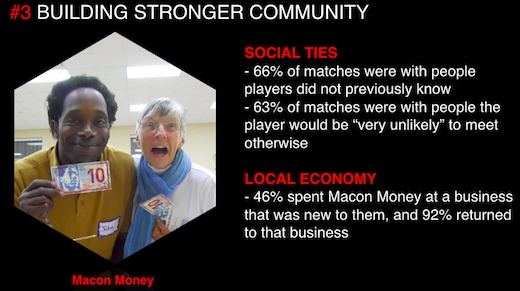

Specific games include:

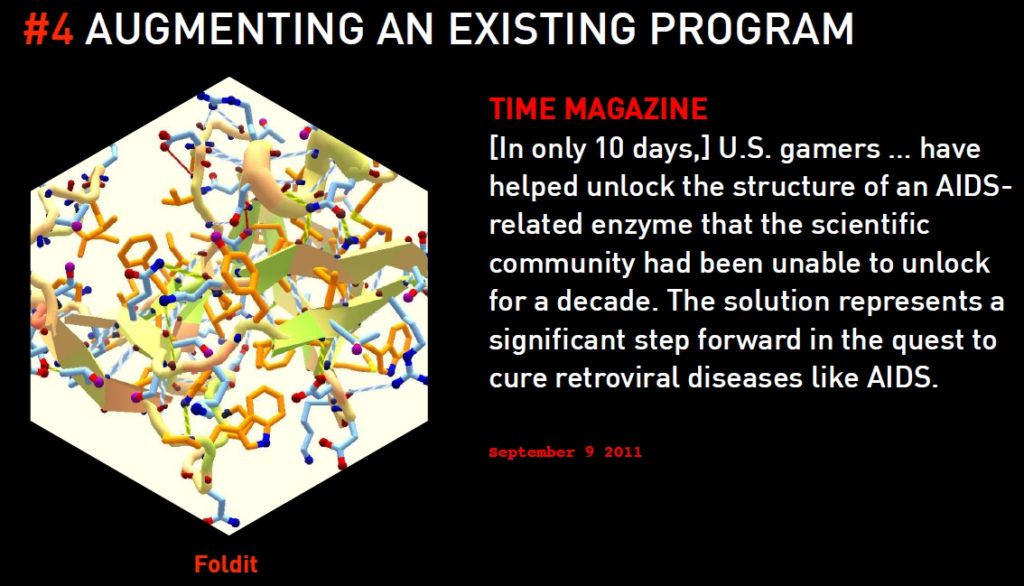

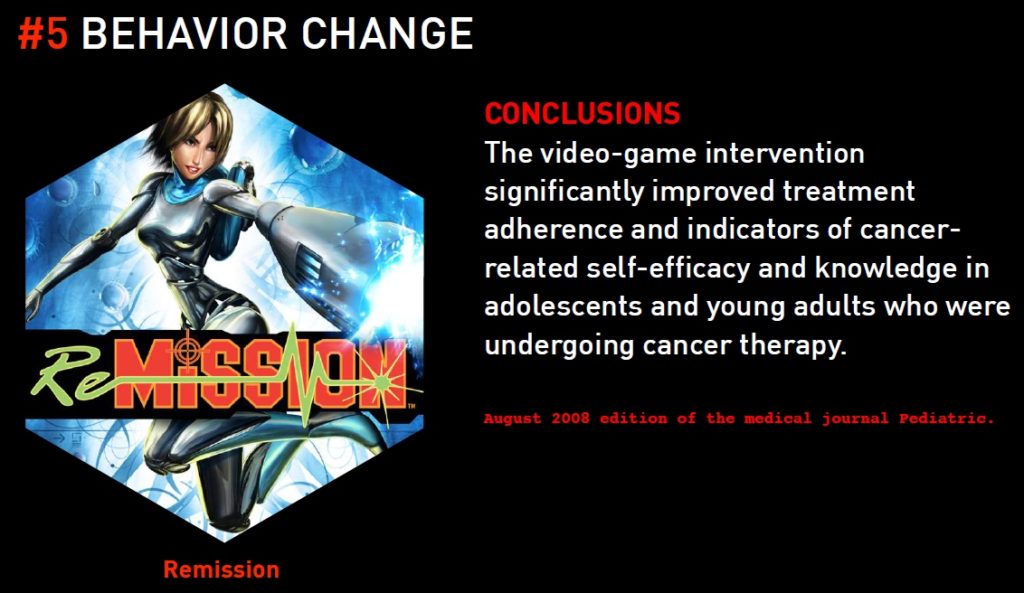

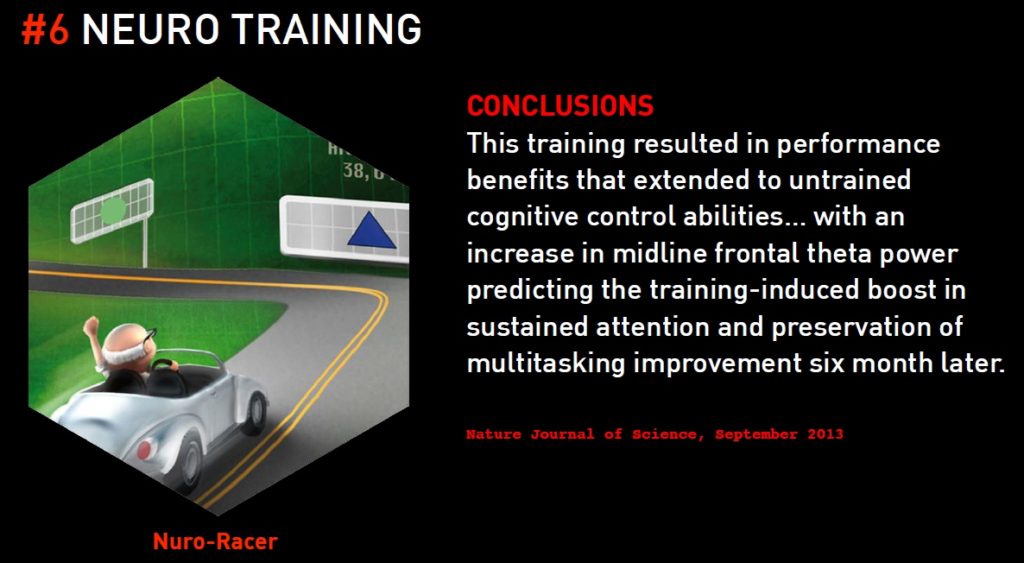

MORE ON THESE GAMES (preliminary images for now… more details to come as we get feedback):

(Slides based on originals from Asi Burak.)

Can you use this typology or image? A: YES! It is licensed for free reuse; we just ask that you give attribution to the Game Impact Project and link back to this site in some way.

Our next iteration will be coming soon. If you have ideas or reactions, let us know!

Posted on behalf of Benjamin Stokes, Aubrey Hill and Asi Burak

Next week Benjamin Stokes will be presenting at the Game Developers Conference (GDC) in San Francisco. This talk extends our research on #GameImpact with G4C, and amazing conversations with pioneers in training designers for research collaborations, including Heather Desurvire, Mary Flanagan, and Jessica Hammer.